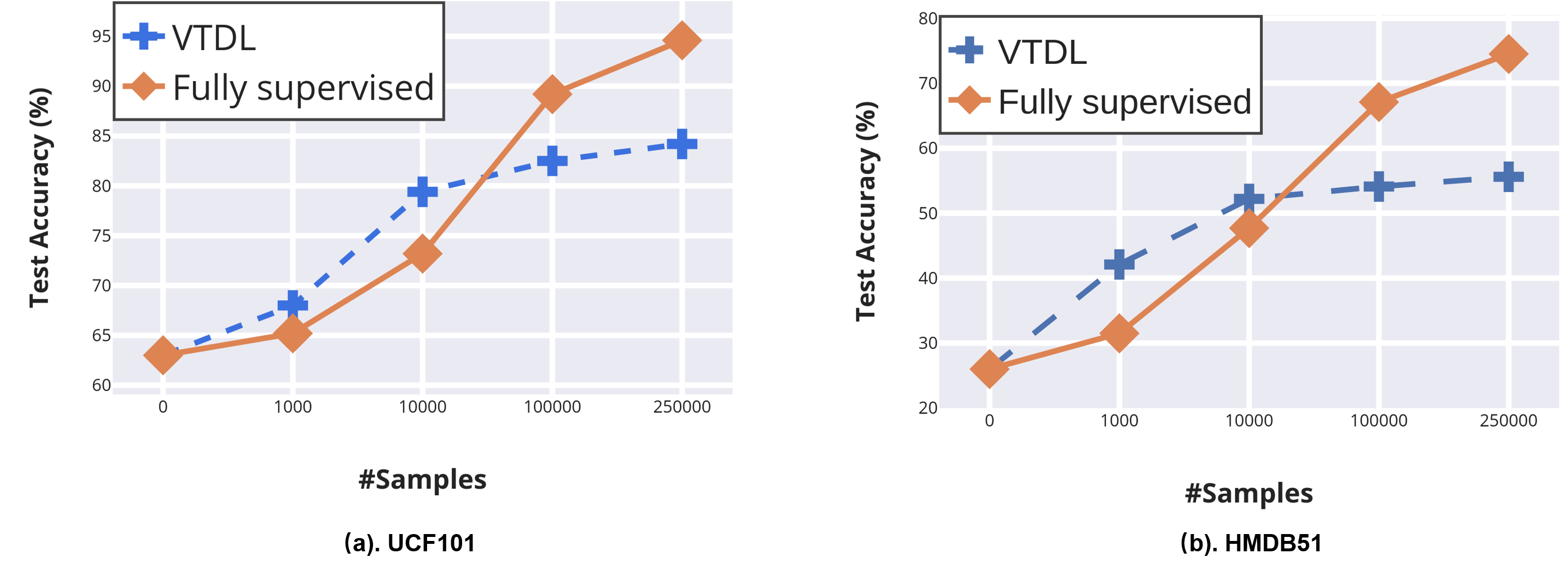

PyTorch Tabular aims to make deep learning with tabular data easy and accessible.

Self-supervised learning (SSL), which aims to harness unlabelled data by learning its structure and invariances, has accumulated a large body of work over the last few years. Prominent SSL methods, such as Masked Language Modeling (MLM) (Devlin et al. [...] training with the self-supervised learning method DINO.

We devise a hybrid deep learning approach to solving tabular data problems. Our method, SAINT, performs attention over both rows and columns, and it includes an enhanced embedding method. We also study a new contrastive self-supervised pre-training method for use when labels are scarce. SAINT consistently improves performance over previous deep learning methods, and it even outperforms gradient boosting methods, including XGBoost, CatBoost, and LightGBM, on average over a variety of benchmark tasks.
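To make "attention over both rows and columns" concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not the SAINT implementation: the attention has no learned query/key/value projections, and the helper names (`attend`, `column_attention`, `row_attention`) are hypothetical. Each table row is assumed to be a list of per-feature embedding vectors.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(tokens):
    """Scaled dot-product self-attention over a list of vectors.

    Assumption: queries, keys and values are the tokens themselves
    (no learned projections), to keep the sketch short.
    """
    d = len(tokens[0])
    out = []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out

def column_attention(batch):
    """Attention across the features (columns) within each row."""
    return [attend(row) for row in batch]

def row_attention(batch):
    """Attention across the rows (samples) of a batch.

    Each row's feature embeddings are flattened into one vector, the rows
    attend to each other, and the result is reshaped back.
    """
    n_features, d = len(batch[0]), len(batch[0][0])
    flat = [[x for feat in row for x in feat] for row in batch]
    mixed = attend(flat)
    return [[vec[f * d:(f + 1) * d] for f in range(n_features)] for vec in mixed]

# A batch of 3 rows, each with 2 features embedded in 2 dimensions.
batch = [
    [[1.0, 0.0], [0.0, 1.0]],
    [[0.5, 0.5], [2.0, -1.0]],
    [[0.0, 0.0], [1.0, 1.0]],
]
over_columns = column_attention(batch)
over_rows = row_attention(batch)
```

Both functions return a batch with the same shape as the input; the only difference is which axis the tokens are taken from. A real model would add learned projections, multiple heads, and feed-forward layers.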

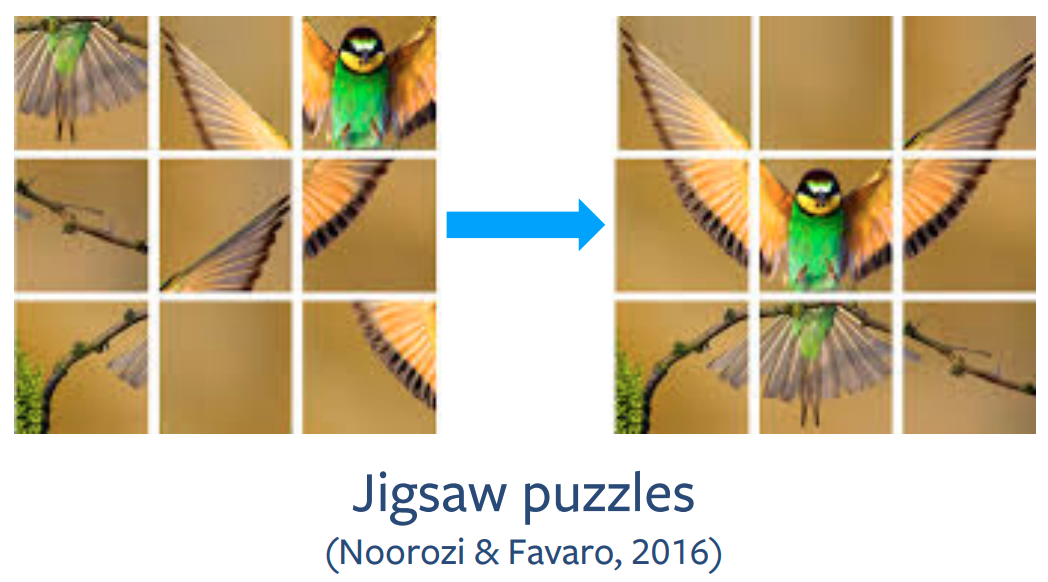

From the abstract of SAINT: Improved Neural Networks for Tabular Data via Row Attention and Contrastive Pre-Training, by Gowthami Somepalli and 4 other authors: Tabular data underpins numerous high-impact applications of machine learning from fraud detection to genomics and healthcare. Classical approaches to solving tabular problems, such as gradient boosting and random forests, are widely used by practitioners. However, recent deep learning methods have achieved a degree of performance competitive with popular techniques. We introduce SAINT, the Self-Attention and INtersample attention Transformer, a specialized architecture for tabular data.

Without deep learning models for tabular data, we lack the ability to exploit compositionality, end-to-end multi-task models, fusion with multiple modalities (e.g. image and text), and representation learning.

Literature on generative self-supervised tabular and imaging models [3, 34] exists, but it is limited in scope, using only two or four clinical features. To the best of our knowledge, there is no implementation of a contrastive self-supervised framework that incorporates both images and tabular data, which we aim to address with this work.

Further reading: Awesome Self-supervised Learning for Tabular Data. VIME: Extending the success of self- and semi-supervised learning to tabular domain. Advances in Neural Information Processing Systems, 33, 2020.

Self-supervised learning leverages a carefully defined pretext task for supervised feature learning where the supervision is automatically generated from the data itself. In this way, a vast number of training instances with supervision can be generated from the unlabeled data to train a model for the pretext task.
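The idea that a pretext task generates its own supervision from unlabeled data can be sketched in a few lines of Python. This is a rough illustration of mask-and-reconstruct corruption on tabular rows, not the exact procedure of any paper mentioned here; the function name `make_pretext_examples`, the dictionary keys, and the `p_mask` parameter are all hypothetical.

```python
import random

def make_pretext_examples(rows, p_mask=0.3, seed=0):
    """Turn unlabeled rows into supervised examples for a pretext task.

    For each row, some cells are chosen at random and replaced by a value
    drawn from the same column of the dataset. The model input is the
    corrupted row; the targets (the mask and the original row) come for
    free from the data itself.
    """
    rng = random.Random(seed)
    n_cols = len(rows[0])
    # Column-wise view of the data, used to sample replacement values.
    columns = [[row[j] for row in rows] for j in range(n_cols)]
    examples = []
    for row in rows:
        mask = [1 if rng.random() < p_mask else 0 for _ in range(n_cols)]
        corrupted = [
            rng.choice(columns[j]) if mask[j] else row[j]
            for j in range(n_cols)
        ]
        examples.append({
            "input": corrupted,         # what the model sees
            "mask_target": mask,        # which cells were selected for corruption
            "recon_target": list(row),  # the original, uncorrupted values
        })
    return examples

# Unlabeled toy table: (age, income, city_id)
unlabeled = [
    [34, 52000, 2],
    [51, 71000, 0],
    [27, 39000, 1],
    [45, 64000, 2],
]
pretext = make_pretext_examples(unlabeled, p_mask=0.5, seed=42)
```

Calling the function again with a different `seed` produces a fresh set of examples from the same rows, which is how a large number of supervised training instances can be derived from a fixed pool of unlabeled data. Note that a sampled replacement may happen to equal the original value, so a selected cell is not always visibly changed.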
